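Preface

Deploying Kubernetes from binaries is more work than other methods, but it shows how each component works, how the components communicate, and how the whole cluster starts up. This "hardcore" approach deepens your understanding of the K8S architecture and makes troubleshooting and performance tuning easier when complex problems show up in production. This article starts from scratch and walks through building a highly available Kubernetes cluster from binaries. It covers the full flow from environment preparation, certificate generation, and component configuration to cluster verification, and shares lessons learned and pitfalls hit during a real deployment. Whether you are an enthusiast who wants to understand the K8S architecture in depth or an operations engineer who needs a highly customized production deployment, it should be a useful reference.

Contents:

Environment planning

Initialization

Building the etcd cluster

Installing the Kubernetes components

Installing the network plugin

Testing the K8S cluster

Installing Keepalived and Nginx for high availability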

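1. Environment planning

OS: CentOS 7.9

Spec: 4 vCPU / 4 GB RAM

Network mode: NAT

Host network: 10.0.0.0/24

Pod network: 172.16.0.0/16

Service network: 192.168.0.0/16

2. Initialization

2.1 Configure a static IP so that the VM keeps the same address after a reboot

## k8s-master01 is used as the example here

--- 1. View the NIC configuration file

[root@k8s-master01 ~]# cat /etc/sysconfig/network-scripts/ifcfg-eth0 ## your NIC may be named ens33 instead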

TYPE="Ethernet"

PROXY_METHOD="none"

BROWSER_ONLY="no"

BOOTPROTO="none"

DEFROUTE="yes"

IPV4_FAILURE_FATAL="no"

IPV6INIT="yes"

IPV6_AUTOCONF="yes"

IPV6_DEFROUTE="yes"

IPV6_FAILURE_FATAL="no"

IPV6_ADDR_GEN_MODE="stable-privacy"

NAME="eth0" ## NIC name; keep it the same as DEVICE

UUID="e2a986aa-3a40-4e8d-9ad2-9a03206356bf"

DEVICE="eth0"

ONBOOT="yes" ## bring the interface up at boot; must be yes

IPADDR="10.0.0.161" ## IP address

PREFIX="24" ## prefix length; equivalent to netmask 255.255.255.0

GATEWAY="10.0.0.2" ## gateway

DNS1="223.5.5.5" ## DNS1

DNS2="223.6.6.6"

IPV6_PRIVACY="no"

--- 2. Restart the network service for the change to take effect

[root@k8s-master01 ~]# systemctl restart network

2.2 Configure hostnames

## Run on 10.0.0.161:

[root@k8s-master01 ~]# hostnamectl set-hostname k8s-master01

## Run on 10.0.0.162:

[root@k8s-master02 ~]# hostnamectl set-hostname k8s-master02

## Run on 10.0.0.163:

[root@k8s-master03 ~]# hostnamectl set-hostname k8s-master03

## Run on 10.0.0.164:

[root@k8s-node01 ~]# hostnamectl set-hostname k8s-node01

2.3 Configure the hosts file

## Run on all hosts so that the hosts can reach each other by name

[root@k8s-master01 ~]# cat >> /etc/hosts << EOF

10.0.0.161 k8s-master01

10.0.0.162 k8s-master02

10.0.0.163 k8s-master03

10.0.0.164 k8s-node01

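EOF

2.4 Configure SSH keys

## For passwordless login between hosts, run this on every host

--- 1. Create an SSH key pair

[root@k8s-master01 ~]# ssh-keygen -t rsa ## press Enter at every prompt

--- 2. Install the local SSH public key into the matching account on each remote host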

[root@k8s-master01 ~]# ssh-copy-id -i /root/.ssh/id_rsa.pub k8s-master01

[root@k8s-master01 ~]# ssh-copy-id -i /root/.ssh/id_rsa.pub k8s-master02

[root@k8s-master01 ~]# ssh-copy-id -i /root/.ssh/id_rsa.pub k8s-master03

[root@k8s-master01 ~]# ssh-copy-id -i /root/.ssh/id_rsa.pub k8s-node01

2.5 Stop and disable the firewall

## Run on all hosts

[root@k8s-master01 ~]# systemctl stop firewalld

[root@k8s-master01 ~]# systemctl disable firewalld

2.6 Disable SELinux

## Run on all hosts

[root@k8s-master01 ~]# sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

## After editing the SELinux config file, reboot the machine for the change to take effect permanently

## After the reboot, log in and verify the change

[root@k8s-master01 ~]# getenforce

## Disabled means the change succeeded

2.7 Disable swap

## Run on all hosts

--- 1. Disable temporarily

[root@k8s-master01 ~]# swapoff -a

--- 2. Disable permanently: comment out the swap mount by adding # at the start of the swap line

[root@k8s-master01 ~]# vim /etc/fstab

#/dev/mapper/centos-swap swap swap defaults 0 0

2.8 Modify kernel parameters

## Run on all hosts

--- 1. Load the br_netfilter module

[root@k8s-master01 ~]# modprobe br_netfilter

--- 2. Verify that the module is loaded

[root@k8s-master01 ~]# lsmod |grep br_netfilter

br_netfilter 22256 0

bridge 155432 1 br_netfilter

--- 3. Set the kernel parameters

[root@k8s-master01 ~]# cat > /etc/sysctl.d/k8s.conf <<EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

EOF

--- 4. Apply the kernel parameters

[root@k8s-master01 ~]# sysctl -p /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

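Question 1: What does sysctl do?

It configures kernel parameters at runtime.

-p loads settings from the given file; with no file given it loads from /etc/sysctl.conf

Question 2: Why run modprobe br_netfilter?

It loads the Linux kernel's bridge netfilter module. Without it the net.bridge.* keys do not exist, so sysctl -p /etc/sysctl.d/k8s.conf may fail.

Question 3: What is net.ipv4.ip_forward?

For security reasons Linux does not forward packets by default. To make a Linux host act as a router you set the kernel parameter net.ipv4.ip_forward, which reflects whether forwarding is enabled: 0 means IP forwarding is disabled, 1 means it is enabled.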
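One way to double-check the three parameters is to read them back from /proc/sys, where each sysctl key appears as a file. The snippet below is a minimal sketch; sysctl_key_to_path and check_sysctl_keys are hypothetical helper names introduced only for this illustration.

```shell
# Map a sysctl key (dot-separated) to its /proc/sys path (slash-separated).
# Hypothetical helper, not part of any tool used in this article.
sysctl_key_to_path() {
  printf '/proc/sys/%s\n' "$(printf '%s' "$1" | tr '.' '/')"
}

# Print "key = value" for each key, or a hint when the key does not exist
# (the net.bridge.* keys only exist once br_netfilter is loaded).
check_sysctl_keys() {
  for key in "$@"; do
    path=$(sysctl_key_to_path "$key")
    if [ -r "$path" ]; then
      printf '%s = %s\n' "$key" "$(cat "$path")"
    else
      printf '%s is missing (is br_netfilter loaded?)\n' "$key"
    fi
  done
}

check_sysctl_keys net.bridge.bridge-nf-call-ip6tables \
  net.bridge.bridge-nf-call-iptables \
  net.ipv4.ip_forward
```

On a correctly prepared host all three keys should print the value 1.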

2.9 Configure the Aliyun mirror repos

## Run on all hosts

--- 1. Back up

[root@k8s-master01 ~]# mv /etc/yum.repos.d/CentOS-Base.repo /etc/yum.repos.d/CentOS-Base.repo.backup

--- 2. Configure the Aliyun repo

[root@k8s-master01 ~]# wget -O /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo

or

[root@k8s-master01 ~]# curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo

--- 3. Configure the Aliyun Docker repo

## Install the required system tools

[root@k8s-master01 ~]# yum install -y yum-utils

## Add the repo

[root@k8s-master01 ~]# yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

## Install Docker

[root@k8s-master01 ~]# yum install docker-ce docker-ce-cli containerd.io docker-buildx-plugin docker-compose-plugin

## To install a specific version of Docker CE:

# Step 1: list the available Docker CE versions:

# yum list docker-ce.x86_64 --showduplicates | sort -r

# Loading mirror speeds from cached hostfile

# Loaded plugins: branch, fastestmirror, langpacks

# docker-ce.x86_64 17.03.1.ce-1.el7.centos docker-ce-stable

# docker-ce.x86_64 17.03.1.ce-1.el7.centos @docker-ce-stable

# docker-ce.x86_64 17.03.0.ce-1.el7.centos docker-ce-stable

# Available Packages

# Step 2: install the chosen version of Docker CE (VERSION is, for example, 17.03.0.ce-1.el7.centos from the list above)

# yum -y install docker-ce-[VERSION]

--- 4. Configure Docker registry mirrors

[root@k8s-master01 ~]# cat /etc/docker/daemon.json

{

"registry-mirrors":["https://docker.xuanyuan.me","https://docker.1ms.run"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

--- 5. Reload the systemd configuration

[root@k8s-master01 ~]# systemctl daemon-reload

--- 6. Start Docker

[root@k8s-master01 ~]# systemctl start docker

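Question 1: What does native.cgroupdriver=systemd in the configuration file do?

It sets Docker's cgroup driver to systemd instead of the default cgroupfs. Docker and the kubelet must use the same cgroup driver, and this guide uses systemd for both.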
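As a quick sanity check, the driver named in daemon.json can be extracted with standard text tools. This is only a sketch: daemon_cgroup_driver is a hypothetical helper, it runs against a throwaway copy of the file shown above, and it falls back to cgroupfs when no driver is set, following the default stated in this article.

```shell
# Print the cgroup driver configured in a Docker daemon.json
# (hypothetical helper; prints "cgroupfs" when the option is absent).
daemon_cgroup_driver() {
  driver=$(grep -o 'native\.cgroupdriver=[a-z]*' "$1" 2>/dev/null | head -n 1 | cut -d= -f2)
  printf '%s\n' "${driver:-cgroupfs}"
}

# Demo against a temporary copy of the configuration from this section.
demo_dir=$(mktemp -d)
cat > "$demo_dir/daemon.json" <<'EOF'
{
  "registry-mirrors": ["https://docker.xuanyuan.me", "https://docker.1ms.run"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF

daemon_cgroup_driver "$demo_dir/daemon.json"
```

On a host where Docker is already running, docker info --format '{{.CgroupDriver}}' reports the driver that is actually in effect.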

2.10 Configure time synchronization

## Run on all hosts

--- 1. Install the time synchronization tool

[root@k8s-master01 ~]# yum install ntpdate -y

--- 2. Add a cron job

[root@k8s-master01 ~]# crontab -e

## Sync the time

*/2 * * * * /sbin/ntpdate ntp1.aliyun.com &>/dev/null

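2.11 Install iptables

## Run on all hosts

--- 1. Install iptables (on CentOS 7 the iptables.service unit comes from the iptables-services package)

[root@k8s-master01 ~]# yum install -y iptables iptables-services

--- 2. Stop and disable the iptables service

[root@k8s-master01 ~]# systemctl stop iptables && systemctl disable iptables

--- 3. Flush the firewall rules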

[root@k8s-master01 ~]# iptables -F

2.12 Enable IPVS

## Run on all hosts

## Without IPVS, iptables is used for packet forwarding, which is less efficient, so enabling IPVS is recommended.

--- 1. Write the IPVS module-loading script

[root@k8s-master03 ~]# cat /etc/modules-load.d/ipvs.conf

#!/bin/bash

ipvs_modules="ip_vs ip_vs_lc ip_vs_wlc ip_vs_rr ip_vs_wrr ip_vs_lblc ip_vs_lblcr ip_vs_dh ip_vs_sh ip_vs_nq ip_vs_sed ip_vs_ftp nf_conntrack"

for kernel_module in ${ipvs_modules}; do

/sbin/modinfo -F filename ${kernel_module} > /dev/null 2>&1

if [ $? -eq 0 ]; then

/sbin/modprobe ${kernel_module}

fi

done

--- 2. Run the script

[root@k8s-master03 ~]# chmod 755 /etc/modules-load.d/ipvs.conf && bash /etc/modules-load.d/ipvs.conf

--- 3. Check the result

[root@k8s-master03 ~]# lsmod |grep ip_vs

ip_vs_ftp 13079 0

ip_vs_sed 12519 0

ip_vs_nq 12516 0

ip_vs_sh 12688 0

ip_vs_dh 12688 0

...

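...

3. Building the etcd cluster

You can download the etcd package yourself or use my package: etcd-v3.4.13

## Run on k8s-master01, k8s-master02, and k8s-master03; alternatively, generate the configuration on master01 and copy it to the other hosts with scp

--- 1. Create the etcd working directories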

[root@k8s-master01 ~]# mkdir -p /etc/etcd

[root@k8s-master01 ~]# mkdir -p /etc/etcd/ssl

--- 2. Install the cfssl certificate tools

## Create the working directory

[root@k8s-master01 ~]# mkdir -p /data/work && cd /data/work

## Download the tools; find them yourself or use mine

[root@k8s-master01 /data/work]# git clone https://gitea.xingzhibang.top/xingzhibang/k8s-cfssl.git

[root@k8s-master01 /data/work]# cd k8s-cfssl/

## Grant execute permission

[root@k8s-master01 /data/work/k8s-cfssl]# chmod +x *

[root@k8s-master01 /data/work/k8s-cfssl]# mv cfssl_linux-amd64 /usr/local/bin/cfssl

[root@k8s-master01 /data/work/k8s-cfssl]# mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

[root@k8s-master01 /data/work/k8s-cfssl]# mv cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

## Check that the tools work

[root@k8s-master03 /data/work]# cfssl version

Version: 1.2.0

Revision: dev

Runtime: go1.6

--- 3. Configure the CA certificate

[root@k8s-master01 /data/work]# cat ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Jiangsu",

"L": "Suzhou",

"O": "k8s",

"OU": "system"

}

],

"ca": {

"expiry": "87600h"

}

}

[root@k8s-master01 /data/work]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

## After running the command above, three new files appear: ca.csr, ca-key.pem, and ca.pem

[root@k8s-master01 /data/work]# ls

ca.csr ca-csr.json ca-key.pem ca.pem

--- 4. Create the CA signing configuration file

[root@k8s-master01 /data/work]# cat ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

--- 5. Generate the etcd certificate

## Create the etcd certificate request; change the IPs in hosts to those of your own etcd nodes

[root@k8s-master01 /data/work]# cat etcd-csr.json

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"10.0.0.161",

"10.0.0.162",

"10.0.0.163"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [{

"C": "CN",

"ST": "Jiangsu",

"L": "Suzhou",

"O": "k8s",

"OU": "system"

}]

}

[root@k8s-master01 /data/work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

## The two files below indicate success

[root@k8s-master01 /data/work]# ls etcd*.pem

etcd-key.pem etcd.pem

--- 6. Deploy the etcd cluster

## Upload etcd-v3.4.13-linux-amd64.tar.gz to /data/work

[root@k8s-master01 /data/work]# tar xf etcd-v3.4.13-linux-amd64.tar.gz

[root@k8s-master01 /data/work]# cp -p etcd-v3.4.13-linux-amd64/etcd* /usr/local/bin/

## Create the configuration file

[root@k8s-master01 /data/work]# vim etcd.conf

#[Member]

ETCD_NAME="etcd1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://10.0.0.161:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.161:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.161:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.161:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://10.0.0.161:2380,etcd2=https://10.0.0.162:2380,etcd3=https://10.0.0.163:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

## Create the data directory

[root@k8s-master01 /data/work]# mkdir -p /var/lib/etcd/default.etcd

## Create the systemd unit file

[root@k8s-master01 /data/work]# cat etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=-/etc/etcd/etcd.conf

WorkingDirectory=/var/lib/etcd/

ExecStart=/usr/local/bin/etcd --cert-file=/etc/etcd/ssl/etcd.pem --key-file=/etc/etcd/ssl/etcd-key.pem --trusted-ca-file=/etc/etcd/ssl/ca.pem --peer-cert-file=/etc/etcd/ssl/etcd.pem --peer-key-file=/etc/etcd/ssl/etcd-key.pem --peer-trusted-ca-file=/etc/etcd/ssl/ca.pem --peer-client-cert-auth --client-cert-auth

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

--- 7. Copy the files to their target directories

[root@k8s-master01 /data/work]# cp ca*.pem /etc/etcd/ssl/

[root@k8s-master01 /data/work]# cp etcd*.pem /etc/etcd/ssl/

[root@k8s-master01 /data/work]# cp etcd.conf /etc/etcd/

[root@k8s-master01 /data/work]# cp etcd.service /usr/lib/systemd/system/etcd.service

## Verify

[root@k8s-master01 /data/work]# openssl x509 -noout -text -in /etc/etcd/ssl/etcd.pem | grep -A1 "Subject Alternative Name"

X509v3 Subject Alternative Name:

IP Address:127.0.0.1, IP Address:10.0.0.161, IP Address:10.0.0.162, IP Address:10.0.0.163

--- 8. Copy the files to the other two master nodes

[root@k8s-master01 /data/work]# for i in k8s-master02 k8s-master03; do scp /data/work/ca*.pem root@$i:/etc/etcd/ssl/; done

[root@k8s-master01 /data/work]# for i in k8s-master02 k8s-master03; do scp /data/work/etcd*.pem root@$i:/etc/etcd/ssl/; done

[root@k8s-master01 /data/work]# for i in k8s-master02 k8s-master03; do scp /data/work/etcd.conf root@$i:/etc/etcd/; done

[root@k8s-master01 /data/work]# for i in k8s-master02 k8s-master03; do scp /data/work/etcd.service root@$i:/usr/lib/systemd/system/etcd.service; done

--- 9. On k8s-master02, edit etcd.conf; the NAME and the IPs are what change

[root@k8s-master02 /etc/etcd]# cat etcd.conf

#[Member]

ETCD_NAME="etcd2"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://10.0.0.162:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.162:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.162:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.162:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://10.0.0.161:2380,etcd2=https://10.0.0.162:2380,etcd3=https://10.0.0.163:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

--- 10. On k8s-master03, edit etcd.conf; the NAME and the IPs are what change

[root@k8s-master03 /etc/etcd]# cat etcd.conf

#[Member]

ETCD_NAME="etcd3"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://10.0.0.163:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.163:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.163:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.163:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://10.0.0.161:2380,etcd2=https://10.0.0.162:2380,etcd3=https://10.0.0.163:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

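--- 11. Start the etcd cluster. The etcd service on k8s-master01 hangs in the starting state at first because a three-member cluster needs two members for quorum; start etcd on k8s-master02 next and the k8s-master01 member comes up normally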

[root@k8s-master01 /data/work]# systemctl daemon-reload

[root@k8s-master02 /data/work]# systemctl daemon-reload

[root@k8s-master03 /data/work]# systemctl daemon-reload

[root@k8s-master01 /data/work]# systemctl enable --now etcd

[root@k8s-master02 /data/work]# systemctl enable --now etcd

[root@k8s-master03 /data/work]# systemctl enable --now etcd

--- 12. Check the cluster status

[root@k8s-master01 /data/work]# ETCDCTL_API=3 /usr/local/bin/etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://10.0.0.161:2379,https://10.0.0.162:2379,https://10.0.0.163:2379 endpoint health

+-------------------------+--------+-------------+-------+

| ENDPOINT | HEALTH | TOOK | ERROR |

+-------------------------+--------+-------------+-------+

| https://10.0.0.161:2379 | true | 17.330692ms | |

| https://10.0.0.162:2379 | true | 19.673519ms | |

| https://10.0.0.163:2379 | true | 33.705374ms | |

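+-------------------------+--------+-------------+-------+

Note on --- 3. Configure the CA certificate

CN: Common Name. kube-apiserver extracts this field from the certificate as the user name of the request; browsers use it to check whether a website is legitimate

O: Organization. kube-apiserver extracts this field from the certificate as the group the requesting user belongs to; in ordinary SSL certificates it names the organization that owns the site

L: city

ST: state or province

C: country, given only as the two-letter code, for example CN for China

expiry: validity period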
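To make the CN-to-user and O-to-group mapping concrete, the sketch below pulls single fields out of a certificate subject string. subject_field is a hypothetical helper, and the sample subject is written by hand from the admin certificate request used later in this article (CN admin, O system:masters) in comma-separated form.

```shell
# Print the value of one field (for example CN or O) from a comma-separated
# certificate subject such as "CN=admin,OU=system,O=system:masters".
# Hypothetical helper for illustration only.
subject_field() {
  printf '%s\n' "$1" | tr ',' '\n' | sed -n "s/^ *$2=//p" | head -n 1
}

subject='CN=admin,OU=system,O=system:masters,L=Suzhou,ST=Jiangsu,C=CN'

# kube-apiserver treats CN as the user name and O as the group.
printf 'user=%s group=%s\n' "$(subject_field "$subject" CN)" "$(subject_field "$subject" O)"
```

For a real certificate, openssl x509 -noout -subject -nameopt RFC2253 -in admin.pem prints the subject in a similar comma-separated form, prefixed with subject=.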

Note on --- 5. Generate the etcd certificate

In the hosts field, list only 127.0.0.1 and the IP addresses of the master nodes

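Note on --- 6. the etcd.conf configuration file

Field descriptions:

ETCD_NAME: member name, unique within the cluster

ETCD_DATA_DIR: data directory

ETCD_LISTEN_PEER_URLS: listen address for peer (cluster) traffic

ETCD_LISTEN_CLIENT_URLS: listen address for client traffic

ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the rest of the cluster

ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

ETCD_INITIAL_CLUSTER: addresses of all cluster members

ETCD_INITIAL_CLUSTER_TOKEN: cluster token

ETCD_INITIAL_CLUSTER_STATE: state when joining the cluster; new for a new cluster, existing to join an existing cluster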
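Since only the member name and the node IP differ between the three etcd.conf files, they can be rendered from one template instead of being edited by hand. The function below is a sketch under that assumption; render_etcd_conf is a hypothetical name, and the member list is hard-coded to the three addresses used in this article.

```shell
# Render etcd.conf for one member. Only NAME and IP vary per node;
# the initial cluster list is identical everywhere.
render_etcd_conf() {
  name=$1
  ip=$2
  cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379,http://127.0.0.1:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="etcd1=https://10.0.0.161:2380,etcd2=https://10.0.0.162:2380,etcd3=https://10.0.0.163:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Example: print the file for the second member.
render_etcd_conf etcd2 10.0.0.162
```

Redirecting the output, for example render_etcd_conf etcd3 10.0.0.163 > etcd.conf, gives a file equivalent to the hand-edited one for that node.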

4. Installing the Kubernetes components

Preparation

Download the Kubernetes binary package: kubernetes 1.23

https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.23.md#server-binaries

--- 1. Upload the binary package to /data/work on k8s-master01

## Extract

[root@k8s-master01 /data/work]# tar xf kubernetes-server-linux-amd64.tar.gz

## Enter the bin directory

[root@k8s-master01 /data/work]# cd kubernetes/server/bin

## Copy the binaries to /usr/local/bin/

[root@k8s-master01 /data/work/kubernetes/server/bin]# cp kube-apiserver kube-controller-manager kube-scheduler kubectl /usr/local/bin/

## Copy the four files to the same directory on the other two masters with scp

[root@k8s-master01 /data/work/kubernetes/server/bin]# for i in kube-apiserver kube-controller-manager kube-scheduler kubectl;do scp $i root@k8s-master02:/usr/local/bin/; done

[root@k8s-master01 /data/work/kubernetes/server/bin]# for i in kube-apiserver kube-controller-manager kube-scheduler kubectl;do scp $i root@k8s-master03:/usr/local/bin/; done

## Copy kubelet and kube-proxy to the node with scp

[root@k8s-master01 /data/work/kubernetes/server/bin]# scp kubelet kube-proxy root@k8s-node01:/usr/local/bin/

## Create the configuration, certificate, and log directories

[root@k8s-master01 /data/work/kubernetes/server/bin]# mkdir -p /etc/kubernetes

[root@k8s-master01 /data/work/kubernetes/server/bin]# mkdir -p /etc/kubernetes/ssl

[root@k8s-master01 /data/work/kubernetes/server/bin]# mkdir -p /var/log/kubernetes

4.1 Deploy the kube-apiserver component

--- 1. Create the token.csv file

[root@k8s-master01 /data/work]# cat > token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

## Format: token, user name, UID, group

[root@k8s-master01 /data/work]# cat token.csv

00f723a2168ddfebce115c428d366149,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
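The token line can also be built and inspected step by step. The sketch below generates a 32-character hex token and splits the resulting line back into its four fields; it uses od -t x1 (one byte at a time) rather than the -t x form above so the hex digits come out in byte order, and it prints to the terminal instead of writing token.csv.

```shell
# Generate a 32-character hex token from 16 random bytes.
token=$(head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n')

# Build one token.csv line: token,user,uid,"group"
line="${token},kubelet-bootstrap,10001,\"system:kubelet-bootstrap\""
printf '%s\n' "$line"

# Split the line back into its fields to show what each column means.
printf '%s\n' "$line" | awk -F, '{
  gsub(/"/, "", $4)
  printf "token=%s user=%s uid=%s group=%s\n", $1, $2, $3, $4
}'
```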

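--- 2. Create the CSR file; replace the IPs with your own. The hosts list must include 192.168.0.1, the first address of the Service network (the ClusterIP of the kubernetes Service); I hit this pitfall myself

[root@k8s-master01 /data/work]# cat kube-apiserver-csr.json

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"10.0.0.161",

"10.0.0.162",

"10.0.0.163",

"10.0.0.164",

"10.0.0.200",

"192.168.0.1",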

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Jiangsu",

"L": "Suzhou",

"O": "k8s",

"OU": "system"

}

]

}

--- 3. Generate the certificate

[root@k8s-master01 /data/work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

--- 4. Create the kube-apiserver configuration file; replace the IPs with your own

[root@k8s-master01 /data/work]# cat kube-apiserver.conf

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--anonymous-auth=false \

--bind-address=10.0.0.161 \

--secure-port=6443 \

--advertise-address=10.0.0.161 \

--authorization-mode=Node,RBAC \

--runtime-config=api/all=true \

--enable-bootstrap-token-auth \

--service-cluster-ip-range=192.168.0.0/16 \

--token-auth-file=/etc/kubernetes/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--client-ca-file=/etc/kubernetes/ssl/ca.pem \

--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \

--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-issuer=https://kubernetes.default.svc.cluster.local \

--etcd-cafile=/etc/etcd/ssl/ca.pem \

--etcd-certfile=/etc/etcd/ssl/etcd.pem \

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \

--etcd-servers=https://10.0.0.161:2379,https://10.0.0.162:2379,https://10.0.0.163:2379 \

--enable-swagger-ui=true \

--allow-privileged=true \

--apiserver-count=3 \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/var/log/kube-apiserver-audit.log \

--event-ttl=1h \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=4"

--- 5. Create the systemd unit file

[root@k8s-master01 /data/work]# cat kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=etcd.service

Wants=etcd.service

[Service]

EnvironmentFile=-/etc/kubernetes/kube-apiserver.conf

ExecStart=/usr/local/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

--- 6. Copy the files to their target directories

[root@k8s-master01 /data/work]# cp ca*.pem /etc/kubernetes/ssl/

[root@k8s-master01 /data/work]# cp kube-apiserver*.pem /etc/kubernetes/ssl/

[root@k8s-master01 /data/work]# cp token.csv /etc/kubernetes/

[root@k8s-master01 /data/work]# cp kube-apiserver.conf /etc/kubernetes/

[root@k8s-master01 /data/work]# cp kube-apiserver.service /usr/lib/systemd/system/

## Copy the files to master02 and master03 with scp

[root@k8s-master01 /data/work]# scp ca*.pem k8s-master02:/etc/kubernetes/ssl/

[root@k8s-master01 /data/work]# scp ca*.pem k8s-master03:/etc/kubernetes/ssl/

[root@k8s-master01 /data/work]# scp kube-apiserver*.pem k8s-master02:/etc/kubernetes/ssl/

[root@k8s-master01 /data/work]# scp kube-apiserver*.pem k8s-master03:/etc/kubernetes/ssl/

[root@k8s-master01 /data/work]# scp token.csv k8s-master02:/etc/kubernetes/

[root@k8s-master01 /data/work]# scp token.csv k8s-master03:/etc/kubernetes/

[root@k8s-master01 /data/work]# scp kube-apiserver.conf k8s-master02:/etc/kubernetes/

[root@k8s-master01 /data/work]# scp kube-apiserver.conf k8s-master03:/etc/kubernetes/

[root@k8s-master01 /data/work]# scp kube-apiserver.service k8s-master02:/usr/lib/systemd/system/

[root@k8s-master01 /data/work]# scp kube-apiserver.service k8s-master03:/usr/lib/systemd/system/

--- 7. On master02 and master03, change the IP addresses in kube-apiserver.conf to the host's own IP
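The two per-host values in that file are --bind-address and --advertise-address, so the edit can be scripted with sed. This is a sketch only: set_apiserver_ip is a hypothetical helper, and the demo works on a shortened temporary copy rather than on /etc/kubernetes/kube-apiserver.conf.

```shell
# Rewrite --bind-address and --advertise-address to the given IP.
# Other flags, including --etcd-servers, are left untouched.
set_apiserver_ip() {
  conf=$1
  ip=$2
  sed -E "s/(--(bind|advertise)-address=)[0-9.]+/\1${ip}/" "$conf" > "$conf.tmp" \
    && mv "$conf.tmp" "$conf"
}

# Demo on a shortened copy of the configuration file.
demo_conf=$(mktemp)
cat > "$demo_conf" <<'EOF'
KUBE_APISERVER_OPTS="--anonymous-auth=false \
--bind-address=10.0.0.161 \
--secure-port=6443 \
--advertise-address=10.0.0.161 \
--etcd-servers=https://10.0.0.161:2379,https://10.0.0.162:2379,https://10.0.0.163:2379"
EOF

set_apiserver_ip "$demo_conf" 10.0.0.162
cat "$demo_conf"
```

On master02 the call would be set_apiserver_ip /etc/kubernetes/kube-apiserver.conf 10.0.0.162, and on master03 the same with 10.0.0.163.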

--- 8. Start kube-apiserver

[root@k8s-master01 /data/work]# systemctl daemon-reload

[root@k8s-master02 /data/work]# systemctl daemon-reload

[root@k8s-master03 /data/work]# systemctl daemon-reload

[root@k8s-master01 /data/work]# systemctl enable --now kube-apiserver.service

[root@k8s-master02 /data/work]# systemctl enable --now kube-apiserver.service

[root@k8s-master03 /data/work]# systemctl enable --now kube-apiserver.service

--- 9. Verify

[root@k8s-master01 /data/work]# curl --insecure https://10.0.0.161:6443/

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {},

"status": "Failure",

"message": "Unauthorized",

"reason": "Unauthorized",

"code": 401

}

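## The 401 above is expected: the request has not been authenticated yet

4.2 Deploy the kubectl component

kubectl is the client tool for operating on k8s resources (create, delete, update, query); it talks to the Kubernetes API Server.

How does kubectl know which cluster to connect to? It needs a kubeconfig file (for example /etc/kubernetes/admin.conf; this guide uses /root/.kube/config) and accesses k8s resources according to it. The file records which k8s cluster to access and which certificates to use.

kubectl is the "remote control" that administrators and developers use to operate a Kubernetes cluster; without it there is almost no direct way to manage Kubernetes.

--- 1. Create the admin-csr certificate request file

[root@k8s-master01 /data/work]# cat admin-csr.json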

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Jiangsu",

"L": "Suzhou",

"O": "system:masters",

"OU": "system"

}

]

}

--- 2. Generate the certificate

[root@k8s-master01 /data/work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

[root@k8s-master01 /data/work]# cp admin*.pem /etc/kubernetes/ssl/

--- 3. Set the cluster parameters

[root@k8s-master01 /data/work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.0.0.161:6443 --kubeconfig=kube.config

### View kube.config

[root@k8s-master01 /data/work]# cat kube.config

apiVersion: v1

clusters:

- cluster:

certificate-authority-data:

...

server: https://10.0.0.161:6443

name: kubernetes

contexts: null

current-context: ""

kind: Config

preferences: {}

users: null

--- 4. Set the client credentials

[root@k8s-master01 /data/work]# kubectl config set-credentials admin --client-certificate=admin.pem --client-key=admin-key.pem --embed-certs=true --kubeconfig=kube.config

### View kube.config

[root@k8s-master01 /data/work]# cat kube.config

apiVersion: v1

clusters:

- cluster:

...

name: kubernetes

contexts: null

current-context: ""

kind: Config

preferences: {}

users:

- name: admin

user:

...

--- 5. Set the context parameters

[root@k8s-master01 /data/work]# kubectl config set-context kubernetes --cluster=kubernetes --user=admin --kubeconfig=kube.config

### View kube.config

apiVersion: v1

clusters:

- cluster:

...

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: admin

name: kubernetes

current-context: ""

kind: Config

preferences: {}

users:

- name: admin

user:

...

--- 6. Set the current context

[root@k8s-master01 /data/work]# kubectl config use-context kubernetes --kubeconfig=kube.config

### View kube.config

apiVersion: v1

clusters:

- cluster:

...

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: admin

name: kubernetes

current-context: kubernetes

kind: Config

preferences: {}

users:

- name: admin

user:

...

--- 7. Copy kube.config to /root/.kube/config; kubectl loads that file when it runs and uses it to operate on k8s resources

[root@k8s-master01 /data/work]# mkdir -p ~/.kube

[root@k8s-master01 /data/work]# cp kube.config ~/.kube/config

--- 8. Grant the kubernetes certificate user access to the kubelet API

[root@k8s-master01 /data/work]# kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes

clusterrolebinding.rbac.authorization.k8s.io/kube-apiserver:kubelet-apis created

--- 9. Verify the cluster status

[root@k8s-master01 /data/work]# kubectl cluster-info

Kubernetes control plane is running at https://10.0.0.161:6443

[root@k8s-master01 /data/work]# kubectl get componentstatuses

## Errors for scheduler and controller-manager are expected here because they have not been deployed yet

[root@k8s-master01 /data/work]# kubectl get all --all-namespaces

--- 10. Sync the kubectl config to the other nodes

[root@k8s-master02 ~]# mkdir /root/.kube/

[root@k8s-master03 ~]# mkdir /root/.kube/

[root@k8s-master01 /data/work]# scp /root/.kube/config k8s-master02:/root/.kube/

[root@k8s-master01 /data/work]# scp /root/.kube/config k8s-master03:/root/.kube/

--- 11. Configure kubectl subcommand completion

[root@k8s-master01 /data/work]# yum install -y bash-completion

[root@k8s-master01 /data/work]# source /usr/share/bash-completion/bash_completion

[root@k8s-master01 /data/work]# source <(kubectl completion bash)

[root@k8s-master01 /data/work]# kubectl completion bash > ~/.kube/completion.bash.inc

[root@k8s-master01 /data/work]# source '/root/.kube/completion.bash.inc'

[root@k8s-master01 /data/work]# source $HOME/.bash_profile

4.3 Deploy the kube-controller-manager component

--- 1. Create the kube-controller-manager-csr.json request file

[root@k8s-master01 /data/work]# cat kube-controller-manager-csr.json

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"10.0.0.161",

"10.0.0.162",

"10.0.0.163",

"10.0.0.200"

],

"names": [

{

"C": "CN",

"ST": "Jiangsu",

"L": "Suzhou",

"O": "system:kube-controller-manager",

"OU": "system"

}

]

}

--- 2. Generate the certificate

[root@k8s-master01 /data/work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

--- 3. Set the cluster parameters (same steps as in the kubectl section, so the output is not repeated)

[root@k8s-master01 /data/work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.0.0.161:6443 --kubeconfig=kube-controller-manager.kubeconfig

--- 4. Set the client credentials

[root@k8s-master01 /data/work]# kubectl config set-credentials system:kube-controller-manager --client-certificate=kube-controller-manager.pem --client-key=kube-controller-manager-key.pem --embed-certs=true --kubeconfig=kube-controller-manager.kubeconfig

--- 5. Set the context

[root@k8s-master01 /data/work]# kubectl config set-context system:kube-controller-manager --cluster=kubernetes --user=system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

--- 6. Set the current context

[root@k8s-master01 /data/work]# kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

--- 7. Create the configuration file kube-controller-manager.conf

[root@k8s-master01 /data/work]# cat kube-controller-manager.conf

KUBE_CONTROLLER_MANAGER_OPTS="--port=0 \

--secure-port=10252 \

--bind-address=127.0.0.1 \

--kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \

--service-cluster-ip-range=192.168.0.0/16 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--allocate-node-cidrs=true \

--cluster-cidr=172.16.0.0/16 \

--experimental-cluster-signing-duration=87600h \

--root-ca-file=/etc/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem \

--leader-elect=true \

--feature-gates=RotateKubeletServerCertificate=true \

--controllers=*,bootstrapsigner,tokencleaner \

--horizontal-pod-autoscaler-sync-period=10s \

--tls-cert-file=/etc/kubernetes/ssl/kube-controller-manager.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-controller-manager-key.pem \

--use-service-account-credentials=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2"

--- 8. Create the systemd unit file kube-controller-manager.service

[root@k8s-master01 /data/work]# cat kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-controller-manager.conf

ExecStart=/usr/local/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

--- 9. Copy the files to their target directories

[root@k8s-master01 /data/work]# cp kube-controller-manager*.pem /etc/kubernetes/ssl

[root@k8s-master01 /data/work]# cp kube-controller-manager.kubeconfig /etc/kubernetes/

[root@k8s-master01 /data/work]# cp kube-controller-manager.conf /etc/kubernetes/

[root@k8s-master01 /data/work]# cp kube-controller-manager.service /usr/lib/systemd/system/

--- 10. Start the service

[root@k8s-master01 /data/work]# systemctl daemon-reload

[root@k8s-master01 /data/work]# systemctl start kube-controller-manager.service

[root@k8s-master01 /data/work]# systemctl status kube-controller-manager.service

--- 11. Copy the files to the other master nodes

[root@k8s-master01 /data/work]# for node in k8s-master02 k8s-master03; do

scp kube-controller-manager*.pem ${node}:/etc/kubernetes/ssl/

scp kube-controller-manager.kubeconfig ${node}:/etc/kubernetes/

scp kube-controller-manager.conf ${node}:/etc/kubernetes/

scp kube-controller-manager.service ${node}:/usr/lib/systemd/system/

done

--- 12. Start the service on the other two masters

[root@k8s-master02 /data/work]# systemctl daemon-reload

[root@k8s-master02 /data/work]# systemctl start kube-controller-manager.service

[root@k8s-master03 /data/work]# systemctl daemon-reload

[root@k8s-master03 /data/work]# systemctl start kube-controller-manager.service

4.4 Deploy the kube-scheduler component

--- 1. Create the kube-scheduler-csr.json request file

[root@k8s-master01 /data/work]# cat kube-scheduler-csr.json

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"10.0.0.161",

"10.0.0.162",

"10.0.0.163",

"10.0.0.200"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Jiangsu",

"L": "Suzhou",

"O": "system:kube-scheduler",

"OU": "system"

}

]

}

--- 2. Generate the certificate

[root@k8s-master01 /data/work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

--- 3. Set the cluster parameters

[root@k8s-master01 /data/work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.0.0.161:6443 --kubeconfig=kube-scheduler.kubeconfig

--- 4. Set the client credentials

[root@k8s-master01 /data/work]# kubectl config set-credentials system:kube-scheduler --client-certificate=kube-scheduler.pem --client-key=kube-scheduler-key.pem --embed-certs=true --kubeconfig=kube-scheduler.kubeconfig

--- 5. Set the context

[root@k8s-master01 /data/work]# kubectl config set-context system:kube-scheduler --cluster=kubernetes --user=system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

--- 6. Set the current context

[root@k8s-master01 /data/work]# kubectl config use-context system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

--- 7. Create the configuration file kube-scheduler.conf

[root@k8s-master01 /data/work]# cat kube-scheduler.conf

KUBE_SCHEDULER_OPTS="--address=127.0.0.1 \

--kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \

--leader-elect=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2"

--- 8. Create the systemd unit file

[root@k8s-master01 /data/work]# cat kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-scheduler.conf

ExecStart=/usr/local/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

--- 9. Copy the files to their target directories

[root@k8s-master01 /data/work]# cp kube-scheduler*.pem /etc/kubernetes/ssl/

[root@k8s-master01 /data/work]# cp kube-scheduler.kubeconfig /etc/kubernetes/

[root@k8s-master01 /data/work]# cp kube-scheduler.conf /etc/kubernetes/

[root@k8s-master01 /data/work]# cp kube-scheduler.service /usr/lib/systemd/system/

--- 10. Start the service

[root@k8s-master01 /data/work]# systemctl daemon-reload

[root@k8s-master01 /data/work]# systemctl start kube-scheduler.service

[root@k8s-master01 /data/work]# systemctl status kube-scheduler.service

--- 11. Copy the files to the other master nodes

[root@k8s-master01 /data/work]# for node in k8s-master02 k8s-master03; do

scp kube-scheduler*.pem ${node}:/etc/kubernetes/ssl/

scp kube-scheduler.kubeconfig ${node}:/etc/kubernetes/

scp kube-scheduler.conf ${node}:/etc/kubernetes/

scp kube-scheduler.service ${node}:/usr/lib/systemd/system/

done

--- 12. Start the service on the other two master nodes

[root@k8s-master02 ~]# systemctl daemon-reload

[root@k8s-master02 ~]# systemctl start kube-scheduler.service

[root@k8s-master03 ~]# systemctl daemon-reload

[root@k8s-master03 ~]# systemctl start kube-scheduler.service

4.5 Deploy the kubelet component

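Import the offline image package: pause-coredns

## Load pause-coredns on the node

[root@k8s-node01 ~]# docker load -i pause-coredns.tar.gz

kubelet: The kubelet on each node periodically calls the API Server's REST interface to report its own status, and the API Server writes that node status to etcd. The kubelet also watches Pod information through the API Server and manages the Pods on its node accordingly, creating, deleting, and updating them.

### Some of the following steps must be run on the node, so watch the hostname in each prompt.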

--- 1. Create kubelet-bootstrap.kubeconfig

[root@k8s-master01 /data/work]# BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/token.csv)

## Your token will probably differ from mine; it only needs to match the contents of token.csv

[root@k8s-master01 /data/work]# echo $BOOTSTRAP_TOKEN

00f723a2168ddfebce115c428d366149
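Before the token goes into the kubeconfig, it can be worth checking that the extraction really returned what is expected. The sketch below is only an illustration under assumptions: it works on a temporary sample file rather than the real /etc/kubernetes/token.csv, it assumes the usual static token layout of token,user,uid,"group", and the uid and group values in the sample line are made up. The helper names extract_bootstrap_token and is_valid_token are hypothetical.

```shell
# Hypothetical sample file; the real one is /etc/kubernetes/token.csv.
# The uid (10001) and the group name are illustrative assumptions.
tmp_csv=$(mktemp)
echo '00f723a2168ddfebce115c428d366149,kubelet-bootstrap,10001,"system:kubelet-bootstrap"' > "$tmp_csv"

# Print the first comma-separated field of the first line (the token).
extract_bootstrap_token() {
  awk -F ',' 'NR == 1 { print $1 }' "$1"
}

# Succeed only if the argument is exactly 32 lowercase hex characters,
# which is the shape of the token shown in this article.
is_valid_token() {
  printf '%s' "$1" | grep -Eq '^[0-9a-f]{32}$'
}

token=$(extract_bootstrap_token "$tmp_csv")
rm -f "$tmp_csv"

if is_valid_token "$token"; then
  echo "token looks valid: $token"
else
  echo "unexpected token format: $token" >&2
fi
```

An empty or malformed BOOTSTRAP_TOKEN would otherwise only surface later, when the kubelet fails to authenticate against the apiserver.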

--- 2. Set the cluster parameters and the client credentials

[root@k8s-master01 /data/work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.0.0.161:6443 --kubeconfig=kubelet-bootstrap.kubeconfig

[root@k8s-master01 /data/work]# kubectl config set-credentials kubelet-bootstrap --token=${BOOTSTRAP_TOKEN} --kubeconfig=kubelet-bootstrap.kubeconfig

--- 3. Set the context

[root@k8s-master01 /data/work]# kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

--- 4. Switch to the new context

[root@k8s-master01 /data/work]# kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

--- 5. Create the cluster role binding

[root@k8s-master01 /data/work]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

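--- 6. Create the configuration file kubelet.json

### "cgroupDriver": "systemd" must match the cgroup driver that Docker uses.

### Change address to your own node IP. clusterDNS is not a node IP: it must be the DNS Service IP inside the Service network (192.168.0.2 here).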

[root@k8s-master01 /data/work]# cat /etc/kubernetes/kubelet.json

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/ssl/ca.pem"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "10.0.0.164",

"port": 10250,

"readOnlyPort": 10255,

"cgroupDriver": "systemd",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"featureGates": {

"RotateKubeletServerCertificate": true

},

"clusterDomain": "cluster.local.",

"clusterDNS": ["192.168.0.2"]

}
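Since clusterDNS has to fall inside the Service network from the environment plan (192.168.0.0/16), a quick sanity check can catch a copy-paste mistake before the kubelet starts. The following is a minimal sketch, not part of the original procedure: it reads a trimmed-down, temporary copy of the JSON rather than the real file, and the helper names ip_to_int and ip_in_cidr are hypothetical.

```shell
# Convert a dotted IPv4 address into a single integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1          # split the address on dots into $1..$4
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeed if address $1 lies inside the CIDR block $2 (for example 192.168.0.0/16).
ip_in_cidr() {
  net=${2%/*}
  bits=${2#*/}
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$1") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

# Trimmed-down sample of kubelet.json, written to a temporary file.
cfg=$(mktemp)
cat > "$cfg" <<'EOF'
{
  "address": "10.0.0.164",
  "clusterDNS": ["192.168.0.2"]
}
EOF

# Pull the single clusterDNS entry out of the JSON with sed.
dns_ip=$(sed -n 's/.*"clusterDNS": *\["\([0-9.]*\)"].*/\1/p' "$cfg")
rm -f "$cfg"

if ip_in_cidr "$dns_ip" 192.168.0.0/16; then
  echo "clusterDNS $dns_ip is inside the Service network"
else
  echo "clusterDNS $dns_ip is OUTSIDE the Service network" >&2
fi
```

The same ip_in_cidr helper could be pointed at any other address and network pair from the environment plan.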

--- 7. Create the systemd unit file

[root@k8s-master01 /data/work]# vim kubelet.service

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/usr/local/bin/kubelet \

--bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \

--cert-dir=/etc/kubernetes/ssl \

--kubeconfig=/etc/kubernetes/kubelet.kubeconfig \

--config=/etc/kubernetes/kubelet.json \

--network-plugin=cni \

--pod-infra-container-image=k8s.gcr.io/pause:3.2 \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

--- 8. Create the directories on the node

[root@k8s-node01 ~]# mkdir /etc/kubernetes/ssl -p

[root@k8s-node01 ~]# mkdir -p /var/lib/kubelet

[root@k8s-node01 ~]# mkdir -p /var/log/kubernetes

## Copy the working files from the master to the node with scp

[root@k8s-master01 /data/work]# scp kubelet-bootstrap.kubeconfig kubelet.json k8s-node01:/etc/kubernetes/

[root@k8s-master01 /data/work]# scp ca.pem k8s-node01:/etc/kubernetes/ssl/

[root@k8s-master01 /data/work]# scp kubelet.service k8s-node01:/usr/lib/systemd/system/

--- 9. Start the kubelet service

[root@k8s-node01 ~]# systemctl daemon-reload

[root@k8s-node01 ~]# systemctl start kubelet

[root@k8s-node01 ~]# systemctl status kubelet

--- 10. Approve the node's CSR on the master node

[root@k8s-master01 /data/work]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR REQUESTEDDURATION CONDITION

node-csr-nvmolqEPBLxLPKyQ_FW9R2eA7y94a6zyb3OrqLyTF8s 47s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Pending

## The CSR name is different for everyone; pass your own name to the approve command

[root@k8s-master01 /data/work]# kubectl certificate approve node-csr-nvmolqEPBLxLPKyQ_FW9R2eA7y94a6zyb3OrqLyTF8s

## On the next check, the CONDITION in the last column changes from Pending to Approved,Issued

[root@k8s-master01 /data/work]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR REQUESTEDDURATION CONDITION

node-csr-nvmolqEPBLxLPKyQ_FW9R2eA7y94a6zyb3OrqLyTF8s 27m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Approved,Issued
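With several nodes joining at once, copying each CSR name by hand gets tedious. As a rough sketch (not part of the original procedure), the Pending names can be filtered out of the table that kubectl get csr prints. The function name pending_csrs is hypothetical, and the second sample row is invented for illustration.

```shell
# Print the names of CSRs whose last column (CONDITION) is exactly "Pending".
# Reads the table printed by `kubectl get csr` on stdin and skips the header row.
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Sample input: the first data row is taken from the listing above,
# the second row is a made-up, already approved request.
sample='NAME AGE SIGNERNAME REQUESTOR REQUESTEDDURATION CONDITION
node-csr-nvmolqEPBLxLPKyQ_FW9R2eA7y94a6zyb3OrqLyTF8s 47s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Pending
node-csr-example-already-approved 27m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Approved,Issued'

pending=$(printf '%s\n' "$sample" | pending_csrs)
echo "$pending"
```

On a live cluster the function could be fed directly, for example kubectl get csr | pending_csrs | xargs -r -n 1 kubectl certificate approve (the -r option belongs to GNU xargs). Review the list before approving anything, since approving a CSR grants that node a client certificate.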

## NotReady is the expected STATUS at this point, because the network plugin has not been installed yet

[root@k8s-master01 /data/work]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-node01 NotReady <none> 13s v1.23.17

4.6 Deploy the kube-proxy component

### kube-proxy runs on the node, but the certificate is issued on the master node and the configuration files are then copied over to the node.

--- 1. Create the certificate request file kube-proxy-csr.json

[root@k8s-master01 /data/work]# cat kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Jiangsu",

"L": "Suzhou",

"O": "k8s",

"OU": "system"

}

]

}

--- 2. Generate the certificate

[root@k8s-master01 /data/work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

--- 3. Set the cluster parameters

[root@k8s-master01 /data/work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.0.0.161:6443 --kubeconfig=kube-proxy.kubeconfig

--- 4. Set the client credentials

[root@k8s-master01 /data/work]# kubectl config set-credentials kube-proxy --client-certificate=kube-proxy.pem --client-key=kube-proxy-key.pem --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

--- 5. Set the context

[root@k8s-master01 /data/work]# kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

--- 6. Switch to the new context

[root@k8s-master01 /data/work]# kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

--- 7. Create the kube-proxy configuration file

## Change the IP addresses here to the IP of your own node

[root@k8s-master01 /data/work]# cat /etc/kubernetes/kube-proxy.yaml

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 10.0.0.164

clientConnection:

  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig

clusterCIDR: 172.16.0.0/16 # Pod network from the environment plan, not the host network

healthzBindAddress: 10.0.0.164:10256

kind: KubeProxyConfiguration

metricsBindAddress: 10.0.0.164:10249

mode: "ipvs"
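The ipvs mode only works when the IPVS kernel modules are loaded on the node. As far as I know, kube-proxy in this version falls back to iptables when they are missing, which is easy to overlook. The sketch below is an assumption-laden illustration: the module list is the one commonly cited for kernels older than 4.19 such as the CentOS 7 kernel (newer kernels use nf_conntrack instead of nf_conntrack_ipv4), the function name missing_ipvs_modules is hypothetical, and the sample lsmod output is invented.

```shell
# Read `lsmod` output on stdin and print every required IPVS module
# that does not appear in it. Prints nothing when all are loaded.
missing_ipvs_modules() {
  loaded=$(awk 'NR > 1 { print $1 }')
  for m in ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4; do
    echo "$loaded" | grep -qx "$m" || echo "$m"
  done
}

# Invented sample in which two of the scheduler modules are not loaded.
sample='Module                  Size  Used by
ip_vs_rr               12600  0
ip_vs                 145458  2 ip_vs_rr
nf_conntrack_ipv4      15053  1
nf_conntrack          139264  2 ip_vs,nf_conntrack_ipv4'

missing=$(printf '%s\n' "$sample" | missing_ipvs_modules)
echo "missing modules: $(echo $missing)"
```

On the node itself the check would be lsmod | missing_ipvs_modules, and each reported module can be loaded with modprobe before kube-proxy is started.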

--- 8. Create the systemd unit file

[root@k8s-master01 /data/work]# cat /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/usr/local/bin/kube-proxy \

--config=/etc/kubernetes/kube-proxy.yaml \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

--- 9. Copy the configuration files to the node

[root@k8s-master01 /data/work]# scp kube-proxy.kubeconfig kube-proxy.yaml k8s-node01:/etc/kubernetes/

[root@k8s-master01 /data/work]# scp kube-proxy.service k8s-node01:/usr/lib/systemd/system/

--- 10. Start the service on the node

[root@k8s-node01 ~]# mkdir -p /var/lib/kube-proxy

[root@k8s-node01 ~]# systemctl daemon-reload

[root@k8s-node01 ~]# systemctl start kube-proxy.service

[root@k8s-node01 ~]# systemctl enable kube-proxy.service

5. Install the network plugin

5.1 Deploy the Calico component

Calico offline image package: calico.tar.gz

## Upload the image package to the k8s-node01 node

--- 1. Load the images

[root@k8s-node01 ~]# docker load -i calico.tar.gz

--- 2. Download the Calico yaml file

## It is available in my code repository.

[root@k8s-node01 ~/cni-calico]# git clone https://gitea.xingzhibang.top/xingzhibang/cni-calico.git

## The main parameters to change

[root@k8s-master01 /data/work]# vim calico.yaml

- name: CALICO_IPV4POOL_CIDR

value: "172.16.0.0/16" # Pod network from the environment plan

- name: IP_AUTODETECTION_METHOD

value: "interface=eth0" # name of the NIC to use

[root@k8s-master01 /data/work]# kubectl apply -f calico.yaml

## Wait until the pods are Running

[root@k8s-master01 /data/work]# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-677cd97c8d-vdshw 1/1 Running 0 23m

kube-system calico-node-9jbjr 1/1 Running 0 23m

## The node is now in the Ready state

[root@k8s-master01 /data/work]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-node01 Ready <none> 8d v1.23.17

5.2 Deploy the CoreDNS component

--- 1. Download the CoreDNS yaml file

[root@k8s-node01 ~/cni-calico]# git clone https://gitea.xingzhibang.top/xingzhibang/cni-calico.git

## Edit the yaml file

[root@k8s-master01 /data/work]# grep clusterIP coredns.yaml

clusterIP: 192.168.0.2 ## This is the DNS IP set as clusterDNS in the kubelet configuration

[root@k8s-master01 /data/work]# kubectl apply -f coredns.yaml

[root@k8s-master01 /data/work]# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-677cd97c8d-vdshw 1/1 Running 0 36m

kube-system calico-node-9jbjr 1/1 Running 0 36m

kube-system coredns-86d879486-s7g7k 1/1 Running 0 2m9s

[root@k8s-master01 /data/work]# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 192.168.0.2 <none> 53/UDP,53/TCP,9153/TCP 5m20s

6. Test the K8S cluster

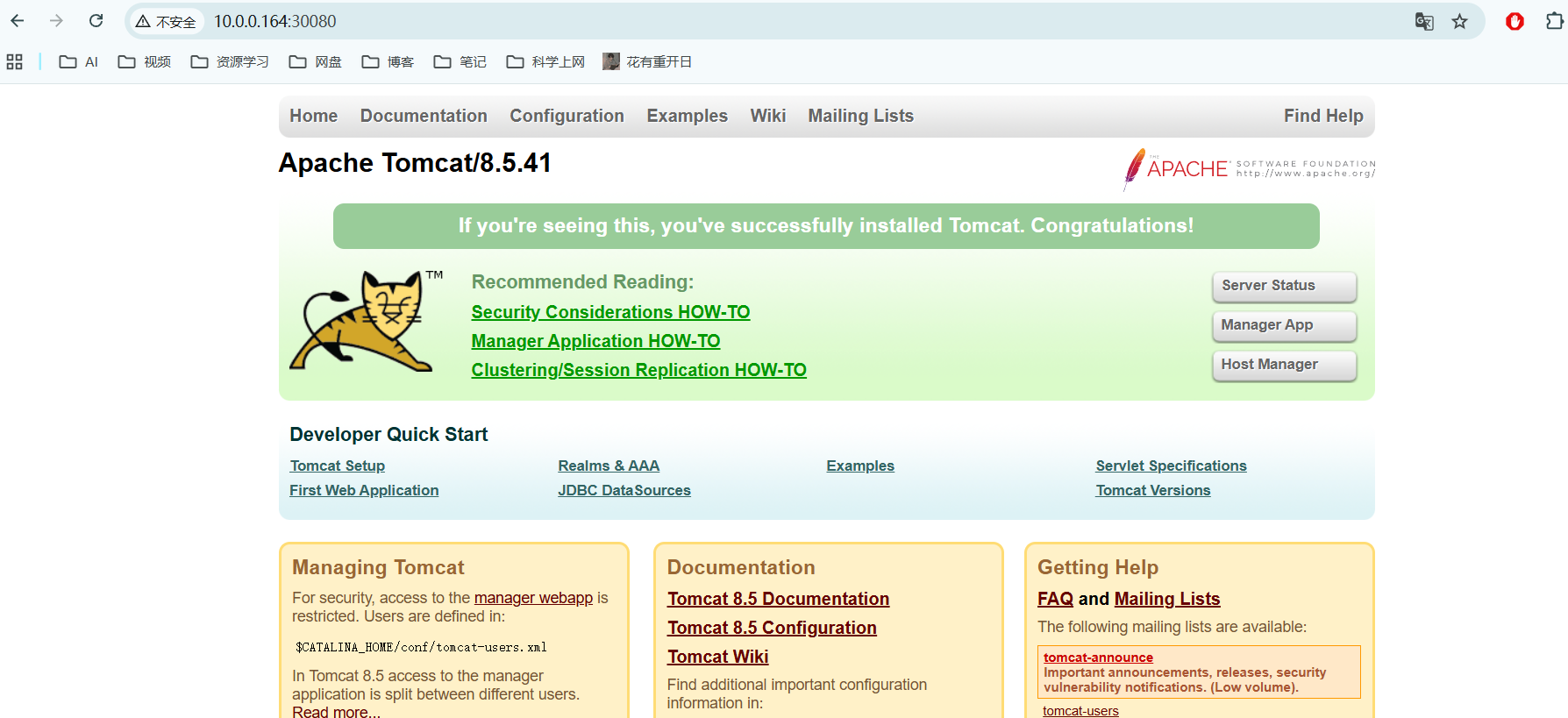

6.1 Deploy the Tomcat service

[root@k8s-master01 /data/work]# cat tomcat.yaml

apiVersion: v1 # Pod belongs to the core API group, v1

kind: Pod # the resource being created is a Pod

metadata: # metadata

  name: demo-pod # Pod name

  namespace: default # namespace the Pod belongs to

  labels:

    app: myapp # label on the Pod

    env: dev # label on the Pod

spec:

  containers: # containers is a list of objects; it can hold several entries, each with its own name

  - name: tomcat-pod-java # container name

    ports:

    - containerPort: 8080

    image: tomcat:8.5-jre8-alpine # image the container uses

    imagePullPolicy: IfNotPresent

  - name: busybox

    image: busybox:latest

    command: # command is a list; each argument below gets a leading dash

    - "/bin/sh"

    - "-c"

    - "sleep 3600"

[root@k8s-master01 /data/work]# kubectl apply -f tomcat.yaml

[root@k8s-master01 /data/work]# kubectl get pod

NAME READY STATUS RESTARTS AGE

demo-pod 2/2 Running 0 5m56s

[root@k8s-master01 /data/work]# cat tomcat-service.yaml

apiVersion: v1

kind: Service

metadata:

  name: tomcat

spec:

  type: NodePort

  ports:

  - port: 8080

    nodePort: 30080

  selector:

    app: myapp

    env: dev

[root@k8s-master01 /data/work]# kubectl apply -f tomcat-service.yaml

[root@k8s-master01 /data/work]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 11d

tomcat NodePort 192.168.184.9 <none> 8080:30080/TCP 4m22s

Open the node's IP on port 30080 in a browser.

6.2 Verify that CoreDNS works

[root@k8s-master01 /data/work]# kubectl run busybox --image=busybox:1.28 --restart=Never -it --rm -- sh

## Pinging Baidu works

/ # ping www.baidu.com

PING www.baidu.com (153.3.238.127): 56 data bytes

64 bytes from 153.3.238.127: seq=0 ttl=127 time=11.085 ms

64 bytes from 153.3.238.127: seq=1 ttl=127 time=11.178 ms

64 bytes from 153.3.238.127: seq=2 ttl=127 time=11.357 ms

## nslookup resolves the tomcat Service

/ # nslookup tomcat.default.svc.cluster.local

Server: 192.168.0.2

Address 1: 192.168.0.2 kube-dns.kube-system.svc.cluster.local

Name: tomcat.default.svc.cluster.local

Address 1: 192.168.184.9 tomcat.default.svc.cluster.local

7. Install Keepalived + Nginx for high availability

7.1 Deploy nginx (primary and backup)

## Run these steps on both k8s-master01 and k8s-master02

--- 1. Configure the nginx yum repository

[root@k8s-master02 ~]# cat /etc/yum.repos.d/nginx.repo

[nginx-stable]

name=nginx stable repo

baseurl=http://nginx.org/packages/centos/$releasever/$basearch/

gpgcheck=1

enabled=1

gpgkey=https://nginx.org/keys/nginx_signing.key

module_hotfixes=true

--- 2. Install nginx

[root@k8s-master01 ~]# yum install nginx

--- 3. Edit the configuration file

[root@k8s-master01 ~]# cat /etc/nginx/nginx.conf

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log;

pid /run/nginx.pid;

include /usr/share/nginx/modules/*.conf;

events {

worker_connections 1024;

}

# Layer-4 load balancing for the apiserver on the two master nodes

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 10.0.0.161:6443; # Master1 APISERVER IP:PORT

server 10.0.0.162:6443; # Master2 APISERVER IP:PORT

}

server {

listen 16443; # nginx shares the host with a master node, so this port must not be 6443 or it would conflict with the apiserver

proxy_pass k8s-apiserver;

}

}

http {

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 2048;

include /etc/nginx/mime.types;

default_type application/octet-stream;

server {

listen 80 default_server;

server_name _;

location / {

}

}

}

7.2 Deploy keepalived (primary and backup)

--- 1. Install keepalived on both the primary and the backup

[root@k8s-master01 ~]# yum install keepalived

--- 2. Edit the keepalived configuration file

## Primary keepalived

[root@k8s-master01 ~]# cat /etc/keepalived/keepalived.conf

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

}

vrrp_instance VI_1 {

state MASTER

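interface eth0 # change to your actual NIC name

virtual_router_id 51 # VRRP router ID, unique per instance

priority 100 # priority; the backup server is set to 90

advert_int 1 # VRRP advertisement (heartbeat) interval, 1 second by default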

authentication {

auth_type PASS

auth_pass 1111

}

# Virtual IP

virtual_ipaddress {

10.0.0.200/24

}

track_script {

check_nginx

}

}

## Backup keepalived

[root@k8s-master02 ~]# cat /etc/keepalived/keepalived.conf

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_BACKUP

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

}

vrrp_instance VI_1 {

state BACKUP

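interface eth0 # change to your actual NIC name

virtual_router_id 51 # VRRP router ID, unique per instance

priority 90 # priority; the backup server is set to 90

advert_int 1 # VRRP advertisement (heartbeat) interval, 1 second by default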

authentication {

auth_type PASS

auth_pass 1111

}

# Virtual IP

virtual_ipaddress {

10.0.0.200/24

}

track_script {

check_nginx

}

}

--- 3. The nginx check script, needed on both the primary and the backup (make it executable with chmod +x)

[root@k8s-master01 ~]# cat /etc/keepalived/check_nginx.sh

#!/bin/bash

# 1. Check whether nginx is running

counter=$(ps -C nginx --no-header | wc -l)

if [ "$counter" -eq 0 ]; then

    # 2. If it is not running, try to start nginx

    service nginx start

    sleep 2

    # 3. After waiting 2 seconds, check the nginx status again

    counter=$(ps -C nginx --no-header | wc -l)

    # 4. If nginx is still down, stop keepalived so that the VIP fails over

    if [ "$counter" -eq 0 ]; then

        service keepalived stop

    fi

fi

--- 4. Start the services

[root@k8s-master01 ~]# systemctl daemon-reload

[root@k8s-master02 ~]# systemctl daemon-reload

[root@k8s-master01 ~]# systemctl enable --now nginx

[root@k8s-master02 ~]# systemctl enable --now nginx

[root@k8s-master01 ~]# systemctl enable --now keepalived

[root@k8s-master02 ~]# systemctl enable --now keepalived

--- 5. Test the VIP

## The virtual IP 10.0.0.200 should show up on the interface

[root@k8s-master01 /data/work]# ip a

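## Stop keepalived on k8s-master01 and the VIP moves to k8s-master02.

At this point every Worker Node component still connects to k8s-master01 directly. Unless they are switched to the VIP and go through the load balancer, the master remains a single point of failure.

So the next step is to change the component configuration files on every Worker Node (the nodes listed by kubectl get node), replacing 10.0.0.161:6443 with 10.0.0.200:16443 (the VIP).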

[root@k8s-node01 /etc/kubernetes]# sed -i 's#10.0.0.161:6443#10.0.0.200:16443#g' kubelet-bootstrap.kubeconfig

[root@k8s-node01 /etc/kubernetes]# sed -i 's#10.0.0.161:6443#10.0.0.200:16443#g' kubelet.json

[root@k8s-node01 /etc/kubernetes]# sed -i 's#10.0.0.161:6443#10.0.0.200:16443#g' kubelet.kubeconfig

[root@k8s-node01 /etc/kubernetes]# sed -i 's#10.0.0.161:6443#10.0.0.200:16443#g' kube-proxy.yaml

[root@k8s-node01 /etc/kubernetes]# sed -i 's#10.0.0.161:6443#10.0.0.200:16443#g' kube-proxy.kubeconfig

[root@k8s-node01 /etc/kubernetes]# systemctl restart kubelet.service

[root@k8s-node01 /etc/kubernetes]# systemctl restart kube-proxy.service
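To see what the sed substitution actually does to a kubeconfig, it can be tried on a throwaway file first. The sketch below is only an illustration: the function name rewrite_apiserver is hypothetical, the sample file holds a single server line rather than a full kubeconfig, and the rewrite goes through a temporary file instead of sed -i so that it does not depend on GNU sed.

```shell
# Replace the apiserver endpoint in a file.
# $1: file to edit, $2: old host:port, $3: new host:port
rewrite_apiserver() {
  out=$(mktemp)
  sed "s#https://$2#https://$3#g" "$1" > "$out" && mv "$out" "$1"
}

# Throwaway file containing only the line that matters in a kubeconfig.
tmp=$(mktemp)
printf '    server: https://10.0.0.161:6443\n' > "$tmp"

rewrite_apiserver "$tmp" 10.0.0.161:6443 10.0.0.200:16443

result=$(cat "$tmp")
rm -f "$tmp"
echo "$result"
```

After the real files are rewritten and the services restarted, grep -r 10.0.0.161:6443 /etc/kubernetes on the node should return nothing if every reference was switched to the VIP.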
